What is the robots.txt file: A simple guide to how it works

A robots.txt file is a simple text file living on your website that gives search engine crawlers a set of instructions. It tells them which pages or files they can look at and which ones they should skip.

Think of it as a friendly guide for bots like Googlebot, helping them navigate your site efficiently. It’s one of the most fundamental tools in technical SEO for managing how search engines crawl and understand your content.

#Your Website’s Digital Traffic Controller

Imagine your website is a huge museum, and search engine crawlers are visitors trying to see everything. The robots.txt file is like the museum map handed out at the entrance. It points visitors to the public exhibits (your important pages) while marking staff-only areas (like admin sections or test pages) as off-limits.

Without this map, the crawlers would wander aimlessly, spending just as much time in the storage closets as they do in the main halls. This guidance is critical because it helps you manage your crawl budget - the finite amount of resources a search engine will spend crawling your site. By blocking unimportant pages, you make sure bots focus their attention on the content you actually want to rank.

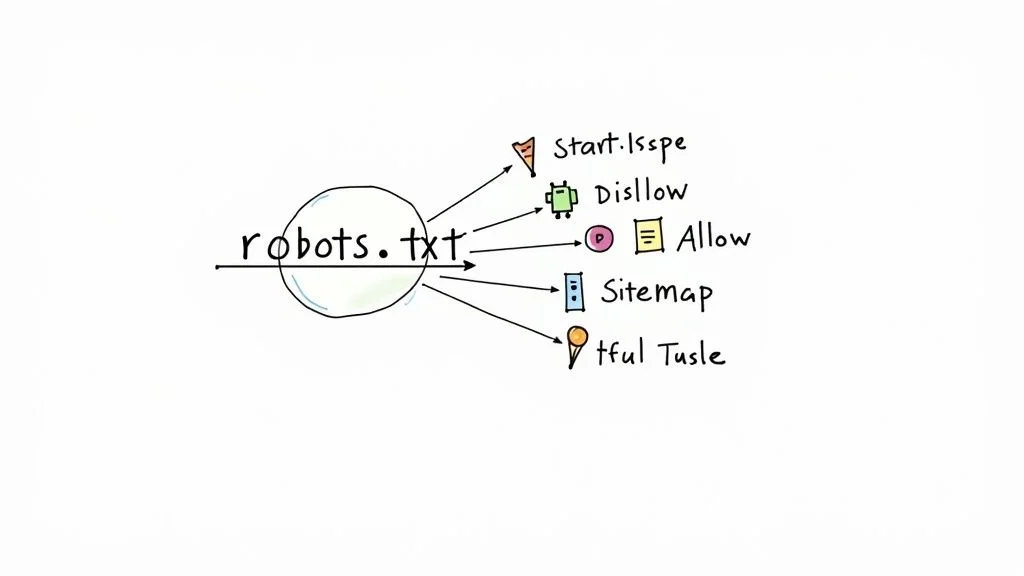

To give you a quick overview, here are the core functions of a robots.txt file.

#Robots.txt at a Glance

| Attribute | Description |
|---|---|
| **Purpose** | To provide instructions to web crawlers (bots) about which parts of a website they should not access. |
| **Location** | Must be placed in the root directory of your domain (e.g., `yourwebsite.com/robots.txt`). |
| **Format** | A plain text file (`.txt`) following a specific syntax. |
| **Function** | Manages crawl traffic to prevent server overload and guide bots to important content. |
| **Key Directives** | `User-agent`, `Disallow`, `Allow`, `Sitemap`, `Crawl-delay`. |
| **SEO Impact** | Helps improve crawl efficiency and ensures search engines focus on high-value pages. |

This simple file plays a surprisingly big role in your site’s overall health and visibility.

#Why Robots.txt is Essential for SEO

A well-configured robots.txt file isn’t just a gatekeeper; it’s a strategic tool that directly impacts your site’s performance.

- Preventing Server Overload: By restricting aggressive bots, you can reduce the strain on your server, making sure your site stays fast and responsive for human visitors.
- Improving Crawl Efficiency: You can tell crawlers to ignore things like pages generated by search filters or tracking parameters. This helps them find and index your unique, high-value content much faster.
- Keeping Private Areas Out of the Crawl: It’s an easy way to ask crawlers to stay out of areas like login pages, user profiles, or development folders, which makes those pages less likely to show up in search results. It is a request, not a lock, so it doesn’t replace real access control.

The core purpose of robots.txt isn’t to build an impenetrable fortress. It’s about creating an efficient roadmap for crawlers. Think of it as communication, not absolute security.

The standard for this file has a pretty practical origin story. It was developed back in 1994 after a web crawler accidentally crashed a server. This incident highlighted the need for a simple way to give instructions to bots, and the robots.txt protocol quickly became the de facto standard adopted by all major search engines. It was formally published as RFC 9309 in 2022.

#The Evolving Role of Bot Management

Today, the idea of giving instructions to automated systems is expanding. You can see a similar concept in the emerging llms.txt file, which aims to provide directions for large language models that interact with web content.

While it’s still new, it shows that the foundational idea behind robots.txt - creating clear, machine-readable instructions - is more relevant than ever as new kinds of bots come online.

#How to Read a Robots.txt File

Popping open a robots.txt file for the first time can feel like staring at a foreign language. But don’t let it fool you. It’s built on a few simple, plain-English commands called directives. Learning this syntax is the key to understanding exactly what a website is telling search engines.

Think of it as leaving a set of notes for different visitors. Each instruction starts by naming the visitor (the bot) and then lays out the ground rules for their visit. It’s a surprisingly straightforward system once you know the vocabulary.

#The Core Vocabulary: User-Agent

The User-agent directive is always the first line in any rule set. It’s the “To:” field on a memo, specifying which web crawler the rules apply to. You’re essentially saying, “Hey, [Bot Name], listen up - this next part is for you.”

Every search engine has its own specific user-agent. For example:

- `User-agent: Googlebot` (This targets Google’s main crawler.)
- `User-agent: Bingbot` (This one’s for Microsoft’s Bing.)

But what if you want to give the same instructions to every bot that comes knocking? That’s where the wildcard - an asterisk (*) - comes in handy.

User-agent: *

This line simply means “These rules apply to everyone.” It’s a clean, efficient way to set a general policy for your entire site.

#Giving Commands: Disallow and Allow

Once you’ve addressed the right bot, it’s time to give it instructions using the Disallow and Allow directives. This is where the real action happens.

The Disallow directive is your digital “Keep Out” sign. It tells the bot which folders or files it isn’t allowed to crawl. The path you list after the colon is always relative to your main domain.

Let’s say you want to block every crawler from your admin login page. Your instruction would look like this:

    User-agent: *
    Disallow: /admin-login/
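If you’d like to sanity-check a rule like this yourself, here’s a minimal sketch using Python’s built-in `urllib.robotparser` module. The domain and paths are placeholders, and Python’s parser is only an approximation of how any particular search engine reads the file.

```python
from urllib.robotparser import RobotFileParser

# The rule set from the example above, one directive per line.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin-login/
"""


def build_parser(robots_txt):
    """Parse robots.txt text that is already in memory (no network request)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)

# yourwebsite.com is a placeholder domain used throughout this guide.
admin_ok = parser.can_fetch("Googlebot", "https://yourwebsite.com/admin-login/")
blog_ok = parser.can_fetch("Googlebot", "https://yourwebsite.com/blog/")

print("admin-login crawlable:", admin_ok)
print("blog crawlable:", blog_ok)
```

With these rules, the admin login path should come back as not crawlable and the blog path as crawlable.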

On the flip side, the Allow directive acts as a special hall pass. It lets a crawler access a specific file or subfolder even if the parent folder is disallowed. This is perfect for creating specific exceptions to broad rules.

Imagine you’ve blocked your entire /media/ directory, but you need Googlebot to see a specific PDF report inside it. You’d combine both directives:

    User-agent: Googlebot
    Disallow: /media/
    Allow: /media/public-report.pdf

Here, we’re telling Googlebot to stay out of the /media/ folder except for that one important file. It’s a simple but powerful way to control access.
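Why does the exception win? Google’s documentation and RFC 9309 describe the tie-breaker as “the most specific (longest) matching rule applies, and Allow wins a tie.” Here’s a rough sketch of that idea. `is_allowed` is a hypothetical helper written for this guide; it only handles plain path prefixes (no wildcards), and individual crawlers may behave differently.

```python
def is_allowed(path, rules):
    """Decide whether `path` may be crawled under one user-agent group.

    `rules` is a list of (directive, pattern) pairs, e.g. ("Disallow", "/media/").
    The longest matching pattern wins; on a tie, Allow beats Disallow.
    """
    best = None  # (pattern length, allowed?) of the strongest match so far
    for directive, pattern in rules:
        if pattern and path.startswith(pattern):
            candidate = (len(pattern), directive.lower() == "allow")
            # Tuples compare by length first, then True > False for ties.
            if best is None or candidate > best:
                best = candidate
    # No matching rule means the path is crawlable by default.
    return True if best is None else best[1]


GOOGLEBOT_RULES = [
    ("Disallow", "/media/"),
    ("Allow", "/media/public-report.pdf"),
]

report_ok = is_allowed("/media/public-report.pdf", GOOGLEBOT_RULES)
photo_ok = is_allowed("/media/photo.jpg", GOOGLEBOT_RULES)
blog_ok = is_allowed("/blog/", GOOGLEBOT_RULES)
```

Note that not every parser works this way. Python’s own `urllib.robotparser`, for example, applies the first matching rule in file order, so the same file can give different answers in different tools.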

#Providing a Map with the Sitemap Directive

While Disallow and Allow tell bots where they can and can’t go, the Sitemap directive helps them find everything you want them to see. Think of it as handing them a map to your site’s most important pages.

An XML sitemap is essentially a treasure map for search engines. It gives them a complete list of all the valuable content you want them to find and index, making their job much quicker and more reliable.

The syntax couldn’t be simpler:

Sitemap: https://www.yourwebsite.com/sitemap.xml

By adding this line (usually at the top or bottom of the file), you give crawlers a perfect starting point. It’s a simple best practice that ensures none of your key pages get missed, even if you’ve already submitted your sitemap through a tool like Google Search Console.
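To confirm that a Sitemap line is actually being picked up, you can read it back programmatically. This is a small sketch assuming Python 3.8 or newer, where `RobotFileParser.site_maps()` is available; the file contents are a made-up example.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical file: one rule group plus a Sitemap line at the bottom.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin-login/

Sitemap: https://www.yourwebsite.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# site_maps() returns a list of declared sitemap URLs, or None if there are none.
sitemaps = parser.site_maps()
print(sitemaps)
```

The Sitemap line is independent of any `User-agent` group, which is why it can sit at the top or bottom of the file.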

Getting the syntax right is crucial. A 2017 analysis of over one million robots.txt files found that while most sites used basic directives correctly, more advanced commands were often a mess. This kind of inconsistency can cause crawlers to behave in unpredictable ways. You can discover more about these findings and the importance of correct syntax to avoid common pitfalls.

Once you’ve mastered these core directives, you can confidently read almost any robots.txt file and know exactly what instructions are being given to search engines.

#Getting Your Hands Dirty: Creating and Uploading Your First Robots.txt File

Alright, let’s move from theory to action. The good news is that making a robots.txt file doesn’t involve any complicated code or fancy software. In fact, you’ve already got everything you need right on your computer: a basic text editor.

The whole point is to keep it simple. The file needs to be plain text so that any web crawler, no matter how basic, can read and understand it instantly. This means you have to stay away from word processors like Microsoft Word or Google Docs. They sneak in hidden formatting code that will completely scramble the file for a bot, making it useless.

Your best friends for this job are Notepad on Windows or TextEdit on a Mac. Just a quick heads-up for Mac users: make sure you switch TextEdit to “plain text” mode (you can find it under Format > Make Plain Text) to strip out any rich text formatting.

#Step-by-Step: Let’s Build the File

Creating your first file is a breeze. The most important part is having a clear game plan for what you want to block or allow before you start typing, so you don’t accidentally tell Google to ignore your most important pages.

- Open a New Document: Fire up Notepad or a plain-text-ready TextEdit.
- Write Your Rules: Start laying down your directives. A solid starting point for most websites is to point to your sitemap and then block off any sensitive or unnecessary areas. A simple file might look like this:

      User-agent: *
      Disallow: /wp-admin/
      Disallow: /cart/
      Sitemap: https://yourwebsite.com/sitemap.xml

- Save It Correctly (This is HUGE): This is the one step you absolutely cannot get wrong. The file must be saved with the exact name robots.txt. Everything has to be lowercase, with no funny business or extra characters.

Crucial Tip: The filename is not a suggestion. A file named Robots.txt or robot.txt might as well not exist. Crawlers will completely ignore it. It has to be robots.txt.
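If you’d rather script this than trust a text editor, the same steps can be sketched in a few lines of Python. `write_robots_txt` is a hypothetical helper, and the example writes into a temporary folder so it doesn’t touch your real site files.

```python
import tempfile
from pathlib import Path

# The same starter rules as in the steps above.
RULES = [
    "User-agent: *",
    "Disallow: /wp-admin/",
    "Disallow: /cart/",
    "Sitemap: https://yourwebsite.com/sitemap.xml",
]


def write_robots_txt(folder, lines):
    """Write the rules as plain UTF-8 text, one directive per line."""
    target = Path(folder) / "robots.txt"  # exact, all-lowercase filename
    target.write_text("\n".join(lines) + "\n", encoding="utf-8")
    return target


with tempfile.TemporaryDirectory() as tmp:
    saved = write_robots_txt(tmp, RULES)
    saved_name = saved.name
    saved_text = saved.read_text(encoding="utf-8")

print(saved_name)
print(saved_text)
```

Writing the file this way sidesteps hidden formatting entirely, because nothing but the directive text ever reaches the file.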

#Where Does This File Live?

Once you’ve saved your masterpiece, it needs to go in the right spot on your website’s server. Search engines are programmed to look for this file in only one specific location: the root directory of your domain.

That means the file has to be live and accessible at https://yourwebsite.com/robots.txt. If you stick it in a subfolder like /blog/ or /public/, it’s invisible. You can usually get it there in one of two ways:

- Using an FTP Client: Tools like FileZilla let you connect directly to your server and just drag and drop the file into the main public folder (often called `public_html` or `www`).
- Through Your Hosting Control Panel: Most web hosts have a File Manager tool inside their cPanel or dashboard. This lets you upload the file straight to the root directory from your web browser.

Getting the location right is non-negotiable. This is especially critical during big website projects; as our complete guide to domain migration SEO explains, simple file misconfigurations like this can wreak havoc on your rankings during a move.
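To make the “root directory only” rule concrete, here’s a small sketch that works out where a crawler would look for robots.txt given any page URL. `robots_url` is a hypothetical helper name, and the URLs are placeholders.

```python
from urllib.parse import urlsplit, urlunsplit


def robots_url(page_url):
    """Return the robots.txt location that governs `page_url`.

    Only the scheme and host are kept; the path, query, and fragment are dropped.
    """
    parts = urlsplit(page_url)
    return urlunsplit((parts.scheme, parts.netloc, "/robots.txt", "", ""))


main_site = robots_url("https://yourwebsite.com/blog/some-post?ref=home")
staging = robots_url("https://staging.yourwebsite.com/new-design/")

print(main_site)
print(staging)
```

Notice that the subdomain gets its own robots.txt location: a file on the main domain does not cover `staging.yourwebsite.com`.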

#The Easy Way: Using a CMS or SEO Plugin

If you’re running your site on a platform like WordPress, things get even simpler. Most of the big-name SEO plugins have a built-in editor that handles the creation and placement of the robots.txt file for you.

- Yoast SEO: Just head to Tools > File editor.
- Rank Math: You’ll find it under General Settings > Edit robots.txt.

Depending on the plugin and your setup, you may be editing a “virtual” robots.txt file. This means you might not actually see a physical file sitting in your root directory if you connect via FTP. But don’t worry - it works exactly the same way and allows you to add or change rules right from your WordPress dashboard, no server access needed.

#How to Test and Validate Your Robots Txt File

So you’ve created your robots.txt file. That’s a huge step, but don’t celebrate just yet. A single typo - an extra slash or a misspelled directive - can have disastrous consequences, like accidentally blocking your entire website from Google.

This is why testing isn’t just a good idea; it’s absolutely essential. Think of it like proofreading a critical email before hitting “send.” You have to be certain the recipient will understand your instructions exactly as you meant them. Luckily, you don’t have to guess.

#Your Go-To Tool: The Google Search Console Tester

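The best place to start is with Google’s own Robots.txt Tester in Google Search Console. This tool is gold because it reads your file just like Googlebot would, giving you a direct preview of how your rules will be interpreted. (Google has since retired the standalone tester in favor of a robots.txt report inside Search Console, so the screens you see may differ from the steps described here, but the checks themselves are the same.)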

With the tester, you can knock out three critical tasks:

- Spot Syntax Errors: It instantly flags any lines that don’t follow the right format, like spelling mistakes (`Disalow` instead of `Disallow`) or structural problems.
- Check for Logical Conflicts: The tool helps you find confusing rules, like when you have `Allow` and `Disallow` directives fighting over the same URL.
- Test Specific URLs: This is the best part. You can plug in any URL from your site and see instantly if it’s blocked or allowed for Googlebot, taking all the guesswork out of the equation.
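The first of those checks is easy to approximate on your own machine. Below is a rough sketch of a spell-check for directive names; `find_unknown_directives` is a hypothetical helper, and the list of known directives is simply the one from the “at a glance” table earlier, so treat anything it flags as a prompt to look closer rather than a verdict.

```python
# Directive names taken from the "Key Directives" row earlier in this guide.
KNOWN_DIRECTIVES = {"user-agent", "disallow", "allow", "sitemap", "crawl-delay"}


def find_unknown_directives(robots_txt):
    """Return (line number, line text) pairs for lines that look malformed."""
    problems = []
    for number, raw in enumerate(robots_txt.splitlines(), start=1):
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue  # blank or comment-only lines are fine
        field = line.split(":", 1)[0].strip().lower()
        if ":" not in line or field not in KNOWN_DIRECTIVES:
            problems.append((number, raw.strip()))
    return problems


DRAFT = """\
User-agent: *
Disalow: /admin-login/
Sitemap: https://yourwebsite.com/sitemap.xml
"""

issues = find_unknown_directives(DRAFT)
print(issues)
```

Here the misspelled `Disalow` on line 2 is the only line that gets flagged.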

This free tool gives you the confidence that your instructions are clear, correct, and ready for crawlers. If you’re new to the platform, our guide on how to use Google Search Console will help you get set up and find the tester in no time.

#Step-by-Step Validation Process

Validating your file is thankfully a straightforward process. You can even test a draft of your robots.txt content before it ever goes live on your site.

- Access the Tester: Head over to the Robots.txt Tester in your Google Search Console property.
- Paste Your Code: Just copy the rules from your text file and paste them right into the editor.
- Check for Errors: The tool will automatically flag any syntax errors or logical warnings, usually by highlighting them in red or orange.

Key Insight: The Robots.txt Tester isn’t just for checking your live file. It’s a sandbox where you can safely experiment with new rules and see their impact without affecting your site’s real-world crawlability.

Once you’ve cleared any initial errors, the next step is to test specific page URLs. This is crucial for making sure your rules are actually working as you intended.

For instance, you should test:

- A URL you want to allow: Like your homepage or an important blog post. The tool should give you a green “Allowed” status.
- A URL you want to block: Such as your `/wp-admin/` login page. This should come back with a red “Blocked” status.
- A URL with an exception: If you blocked a whole folder but allowed one specific file inside it, test that file’s URL to confirm the `Allow` rule is being correctly prioritized.
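You can also keep a small list of “must be allowed” and “must be blocked” URLs and re-run it whenever the file changes. Here’s a minimal sketch of that idea using Python’s standard library; `check_expectations` is a hypothetical helper and the URLs are placeholders. Because Python’s parser applies the first matching rule rather than the most specific one, this sketch sticks to simple allow and block cases.

```python
from urllib.robotparser import RobotFileParser

DRAFT = """\
User-agent: *
Disallow: /wp-admin/
Disallow: /cart/
"""

# URL -> should a crawler be allowed to fetch it?
EXPECTATIONS = {
    "https://yourwebsite.com/": True,
    "https://yourwebsite.com/blog/important-post/": True,
    "https://yourwebsite.com/wp-admin/": False,
    "https://yourwebsite.com/cart/checkout": False,
}


def check_expectations(robots_txt, expectations, agent="Googlebot"):
    """Return the URLs whose actual result differs from the expected one."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [
        url
        for url, should_allow in expectations.items()
        if parser.can_fetch(agent, url) != should_allow
    ]


failures = check_expectations(DRAFT, EXPECTATIONS)
print("Unexpected results:", failures)
```

An empty list means every URL behaved the way you intended.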

By catching issues early, you ensure that search engines can crawl your valuable content efficiently while staying away from the areas you’ve marked as off-limits. This simple checkup prevents costly SEO mistakes and keeps your site’s relationship with crawlers healthy and predictable.

#Strategic Uses for Robots Txt in SEO

Think of your robots.txt file as more than just a bouncer at the door. When used correctly, it’s one of the most powerful tools in your technical SEO arsenal. Going beyond simple “allow” or “disallow” commands lets you become an expert traffic controller for your entire website, guiding search engine crawlers with surgical precision.

This is all about making smart, proactive decisions to protect your brand, manage your crawl budget, and prevent search engines from getting confused. A well-crafted robots.txt file solves common SEO headaches before they even begin, ensuring that crawlers spend their valuable time on the content that actually drives your business. To really get the most out of this, a solid foundation in Search Engine Optimization (SEO) principles is key.

#Shielding Development and Staging Sites

One of the most essential uses for robots.txt is keeping your test environments completely invisible. When your team is building a new design or feature on a staging server (like staging.yourwebsite.com), the absolute last thing you want is for Google to stumble upon it, crawl it, and slap it into the search results.

This mistake can cause a mess - from duplicate content issues to potential customers seeing a broken, unfinished version of your site. The fix is a simple but non-negotiable rule in your staging site’s robots.txt file:

    User-agent: *
    Disallow: /

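This straightforward command tells every compliant bot that shows up to turn right back around. It’s a simple way to keep crawlers out of the staging site, though a blocked URL can still end up indexed if other sites link to it, so password-protecting the staging server is the safer complement. Either way, the goal is the same: only the polished, final version of your website ever sees the light of day.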
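That single slash matters more than it looks. As a quick illustration, here’s a sketch comparing `Disallow: /` with an empty `Disallow:` line using Python’s built-in parser; the `allowed` helper and the staging URL are placeholders for this example.

```python
from urllib.robotparser import RobotFileParser


def allowed(robots_txt, url, agent="Googlebot"):
    """Check one URL against robots.txt text held in memory."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


BLOCK_EVERYTHING = "User-agent: *\nDisallow: /\n"
BLOCK_NOTHING = "User-agent: *\nDisallow:\n"  # an empty value blocks nothing

staging_url = "https://staging.yourwebsite.com/new-design/"

with_slash = allowed(BLOCK_EVERYTHING, staging_url)
without_slash = allowed(BLOCK_NOTHING, staging_url)

print("Disallow: /  ->", with_slash)
print("Disallow:    ->", without_slash)
```

The version with the slash blocks the whole site, while the version without it leaves everything open. That is exactly the kind of one-character slip that makes testing worthwhile, and a reason to double-check that the staging file never ships to the live site.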

#Managing Crawl Budget on Large Websites

If you run a massive e-commerce site or a publication with tens of thousands of pages, crawl budget isn’t just a buzzword - it’s a finite resource. Search engines only have so much energy to spend crawling your site. If they waste that energy on thousands of low-value, duplicate pages created by faceted navigation (like filtering products by color, then size, then price), they might run out of steam before they even reach your most important pages.

You can use robots.txt to block these parameter-heavy URLs and guide crawlers away from the rabbit holes. A rule like this, for example, can stop bots from getting lost in endless filter combinations:

    User-agent: *
    Disallow: /*?filter_color=
    Disallow: /*?filter_price=

By blocking these dynamically generated pages, you funnel your crawl budget straight to the unique, valuable content you want to rank. A great way to fine-tune this is by digging into your server logs to see where bots are actually spending their time. If you want to get your hands dirty with the data, learning to analyze log files to improve your SEO is a game-changer.
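Wildcard rules are easy to get subtly wrong, so it helps to see how a pattern is matched. The sketch below assumes the common interpretation where `*` matches any run of characters and a trailing `$` anchors the end of the URL. `matches` is a hypothetical helper, the URLs are made up, and Python’s built-in `urllib.robotparser` does not handle wildcards itself.

```python
import re


def pattern_to_regex(pattern):
    """Translate a robots.txt path pattern into a compiled regular expression."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # Escape everything literally, then let each * match any run of characters.
    body = ".*".join(re.escape(part) for part in pattern.split("*"))
    return re.compile("^" + body + ("$" if anchored else ""))


def matches(pattern, path):
    """True if `path` (including any query string) is covered by `pattern`."""
    return pattern_to_regex(pattern).match(path) is not None


rule = "/*?filter_color="

first_param = matches(rule, "/shoes?filter_color=red")
later_param = matches(rule, "/shoes?size=9&filter_color=red")
no_filter = matches(rule, "/shoes")

print(first_param, later_param, no_filter)
```

One thing this makes visible: the rule only catches URLs where `filter_color` is the first parameter after the `?`. If your filters can appear in any order, you would likely need a broader pattern, and it’s worth testing real URLs from your logs before relying on it.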

#Robots.txt vs Noindex: The Critical Difference

This is easily one of the most misunderstood concepts in technical SEO, and getting it wrong can cause major problems. While both tools help control how search engines interact with your content, they do completely different jobs.

Blocking with robots.txt tells a crawler, “Don’t come in this room.” Using a noindex tag tells a crawler, “Come in, but don’t tell anyone this room exists.”

A robots.txt Disallow is like a locked door. The crawler knows a page is there but can’t get inside to see the content. A noindex tag, on the other hand, is an instruction inside the room. The crawler has to enter the room (crawl the page) to see the sign telling it not to add the page to its public index.

#Robots.txt Disallow vs Noindex Tag

This table breaks down the key differences to help you choose the right tool for the job.

| Feature | Robots.txt Disallow | Noindex Meta Tag |
|---|---|---|
| **Action** | Prevents crawling of a page or directory. | Prevents indexing of a specific page. |
| **How It Works** | Blocks access to the URL entirely. The crawler never sees the page's content. | The crawler must visit the page to read the `noindex` instruction in the HTML. |
| **Result** | The page might still show up in search results (if linked from elsewhere), often with a note like "A description for this result is not available." | The page is removed from or never added to the search index. |
| **Best For** | Blocking low-value sections like admin areas or managing crawl budget for filtered URLs. | Removing specific pages (like thin content or thank-you pages) from search results. |

Here’s the crucial takeaway: to get a page de-indexed, you must allow Google to crawl it. If you block a URL in robots.txt and add a noindex tag to the page, Google will never see the noindex command because it can’t get past the locked door. The page could remain stuck in the index indefinitely. Choosing the right directive is everything.
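This conflict is simple to check for if you keep a list of the pages you’ve tagged with noindex. The sketch below is one way to do it with Python’s standard library; `noindex_conflicts` is a hypothetical helper, and the rules and URLs are invented for illustration.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: *
Disallow: /thank-you/
"""

# Pages that carry a noindex tag (a hand-maintained list in this example).
NOINDEX_URLS = [
    "https://yourwebsite.com/thank-you/",
    "https://yourwebsite.com/old-landing-page/",
]


def noindex_conflicts(robots_txt, noindex_urls, agent="Googlebot"):
    """Return noindex pages that crawlers are blocked from ever reading."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in noindex_urls if not parser.can_fetch(agent, url)]


conflicts = noindex_conflicts(ROBOTS_TXT, NOINDEX_URLS)
print(conflicts)
```

Any URL in the result is a page whose noindex tag can’t be seen, so the Disallow rule would need to be lifted for the tag to take effect.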

#Common Questions About robots.txt

Once you start working with robots.txt, you’ll quickly run into some common “what if” scenarios. This section is all about tackling those frequent points of confusion head-on. Think of it as your go-to FAQ for clearing up misconceptions and getting straight answers.

Getting these details right is the difference between simply having a robots.txt file and actually mastering it to avoid costly mistakes.

#Will Blocking a Page in Robots Txt Remove It from Google?

This is probably the biggest and most important myth to bust: No, blocking a page in robots.txt will not get it removed from Google’s index. All this file does is tell Google not to crawl the page in the future; it doesn’t erase what’s already been indexed.

If a page is already indexed and has links pointing to it from other websites, it can absolutely still show up in search results. You’ve probably seen the result: a frustrating message that says, “A description for this result is not available because of this site’s robots.txt.”

To actually remove a page from the index, you need to use a noindex meta tag. Here’s the critical part: you have to allow Google to crawl the page one last time to see that noindex instruction. Blocking it in robots.txt is like locking the door before the movers can get the furniture out.
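If you want to confirm that a page really carries the tag, a few lines of Python can look for it in the HTML. This is a simplified sketch: `has_noindex` is a hypothetical helper, it only inspects `<meta name="robots">` and `<meta name="googlebot">` tags, and it ignores other ways of sending the same signal, such as HTTP response headers.

```python
from html.parser import HTMLParser


class RobotsMetaFinder(HTMLParser):
    """Records whether any robots meta tag on the page contains noindex."""

    def __init__(self):
        super().__init__()
        self.noindex = False

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attributes = {name: (value or "") for name, value in attrs}
        if attributes.get("name", "").lower() in ("robots", "googlebot"):
            tokens = [t.strip().lower() for t in attributes.get("content", "").split(",")]
            if "noindex" in tokens:
                self.noindex = True


def has_noindex(html):
    finder = RobotsMetaFinder()
    finder.feed(html)
    return finder.noindex


tagged = has_noindex('<html><head><meta name="robots" content="noindex, follow"></head></html>')
untagged = has_noindex('<html><head><meta name="robots" content="index, follow"></head></html>')

print(tagged, untagged)
```

Pair a check like this with your robots.txt rules: the tag only helps if the page it sits on is still crawlable.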

#Can I Use Robots Txt to Stop AI Data Scrapers?

Yes, you can, and it’s quickly becoming a standard move for site owners. You can specifically tell AI bots not to scrape your site for the purpose of training their language models. It’s as simple as adding rules for their unique user-agents.

For example, to block OpenAI’s crawler, you’d add this to your file:

    User-agent: GPTBot
    Disallow: /

You can do the same for Google’s AI models by adding a rule for Google-Extended. This sends a clear signal that you don’t consent to your content being used for training data. While you can’t guarantee every bot will play by the rules, the major players like Google and OpenAI generally do.
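If the list of bots you want to block keeps growing, you could generate these groups instead of typing them by hand. Here’s a tiny sketch; `block_agents` is a hypothetical helper, and the two user-agent names are the ones mentioned above.

```python
def block_agents(agents):
    """Build one 'block everything' rule group per user-agent name."""
    groups = [f"User-agent: {agent}\nDisallow: /" for agent in agents]
    # A blank line between groups keeps each rule set clearly separated.
    return "\n\n".join(groups) + "\n"


ai_rules = block_agents(["GPTBot", "Google-Extended"])
print(ai_rules)
```

You would then append the generated text to your existing robots.txt rather than replacing the file.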

#What Happens If I Don’t Have a Robots Txt File?

When a search crawler lands on your site and can’t find a robots.txt file, it makes a very simple assumption: everything is fair game. With no instructions to follow, bots will assume they have a green light to crawl every single page they can find.

For a tiny, simple website, this might not be a big deal.

But for most sites, it can cause real problems:

- Wasted Crawl Budget: Bots might burn through their allotted time crawling low-value pages like internal search results, parameter-heavy URLs, or admin login screens.
- Indexing Unwanted Pages: You could see private or irrelevant pages pop up in search results, which is never a good look.
- Server Strain: Aggressive crawlers can put an unnecessary load on your server, potentially slowing the site down for your actual human visitors.

Even a basic robots.txt file is always a smart move.

#Can I Have Different Rules for Different Search Engines?

Absolutely. This is one of the most useful features of the robots.txt protocol. You can create tailored instructions for different crawlers by setting up separate rule blocks for each specific User-agent.

You might, for instance, want Bingbot to steer clear of a certain directory while giving Googlebot full access. Your file would just need separate rule sets to make that happen.

Here’s what that looks like in practice:

    # Rules for Google
    User-agent: Googlebot
    Disallow: /private-for-google/

    # Rules for Bing
    User-agent: Bingbot
    Disallow: /another-folder/

You can then add a catch-all rule using User-agent: * to set the default instructions for every other bot that comes knocking. This kind of granular control is perfect for fine-tuning your SEO strategy and managing how different search engines see your site.
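One detail that trips people up: a bot with its own group follows that group and skips the catch-all. The sketch below shows this with Python’s built-in parser, using the two groups above plus a hypothetical catch-all that blocks `/admin-login/`. The bot name `SomeOtherBot` is made up, and real crawlers may differ in the details.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
# Rules for Google
User-agent: Googlebot
Disallow: /private-for-google/

# Rules for Bing
User-agent: Bingbot
Disallow: /another-folder/

# Default rules for everyone else (hypothetical)
User-agent: *
Disallow: /admin-login/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

site = "https://yourwebsite.com"
results = {
    "google_private": parser.can_fetch("Googlebot", site + "/private-for-google/"),
    "bing_private": parser.can_fetch("Bingbot", site + "/private-for-google/"),
    "google_admin": parser.can_fetch("Googlebot", site + "/admin-login/"),
    "other_admin": parser.can_fetch("SomeOtherBot", site + "/admin-login/"),
}

for name, allowed in results.items():
    print(name, allowed)
```

Here Googlebot is kept out of `/private-for-google/` but can still reach `/admin-login/`, because the catch-all only applies to bots without a group of their own. If you want a default rule to apply to everyone, repeat it inside each named group.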

Mastering technical SEO, including the strategic use of your robots.txt file, is fundamental to driving organic growth. At Rankdigger, we transform your Google Search Console data into actionable insights, helping you identify high-potential keywords and optimize your content with confidence. Explore how Rankdigger can help you maximize your visibility and turn searchers into customers.